Version 1 (modified by bennylp, 17 years ago)


Understanding Media Flow

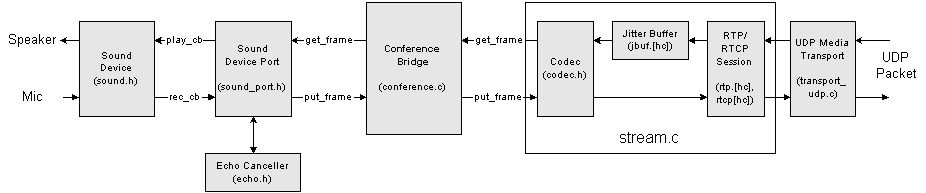

The diagram below shows the media interconnection of multiple parts in a typical call:

Media Timing

The entire media flow is driven by the timing of the sound device, in particular by its playback callback:

- When the sound device needs another frame to play to the speaker, the sound device abstraction calls the play_cb() callback that was registered with the sound device when it was created.

- The sound device port translates this play_cb() callback into a pjmedia_port_get_frame() call to its downstream port, which in this case is the conference bridge.

- A pjmedia_port_get_frame() call to the conference bridge triggers the bridge to call pjmedia_port_get_frame() on every port in the bridge, mix the signals together where necessary, and deliver the mixed signals by calling pjmedia_port_put_frame() on every port in the bridge. When the bridge has finished all of this, it returns the mixed signal for slot zero as the result of the original pjmedia_port_get_frame() call, and the sound device then processes that frame.

- A pjmedia_port_get_frame() call from the conference bridge to a media stream port causes the stream to take one frame from the jitter buffer, decode it using the configured codec (or apply Packet Loss Concealment (PLC) if the frame is lost), and return the resulting PCM frame to the caller. Note that the jitter buffer is filled by another thread (the thread that polls the network sockets); this is described in a later section.

- A pjmedia_port_put_frame() call from the conference bridge to a media stream port causes the stream to encode the PCM frame with the codec configured for the stream, pack the encoded frame into an RTP packet using its RTP session, update its RTCP session, schedule RTCP transmission, and deliver the RTP/RTCP packets to the underlying media transport previously attached to the stream. The media transport then sends the RTP/RTCP packets to the network.
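The pull-driven chain described in the list (sound device callback, then a get_frame on the bridge, then get_frame/put_frame on every port) can be modeled in a small standalone C sketch. The types and functions here (`toy_port`, `toy_bridge`, `toy_bridge_get_frame()`, `toy_play_cb()`) are hypothetical stand-ins, not the pjmedia API. The sketch makes several assumptions: every slot is connected to every other slot, a slot never hears its own signal, and slot 0 is the sound device side, whose mix is returned to the caller rather than passed to put_frame.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical stand-ins for pjmedia_port and the conference bridge.
 * They are NOT the pjmedia API; they only model the pull-driven call order. */
#define FRAME_LEN 4   /* samples per frame, kept tiny for readability */
#define MAX_SLOTS 4

typedef struct toy_port toy_port;
struct toy_port {
    int16_t source;              /* constant sample this port produces       */
    int16_t received[FRAME_LEN]; /* last frame delivered to it by the bridge */
};

/* Stand-in for pjmedia_port_get_frame(): the port produces one frame. */
static void toy_get_frame(toy_port *p, int16_t *out) {
    for (int i = 0; i < FRAME_LEN; ++i)
        out[i] = p->source;
}

/* Stand-in for pjmedia_port_put_frame(): the port consumes one frame. */
static void toy_put_frame(toy_port *p, const int16_t *in) {
    memcpy(p->received, in, sizeof p->received);
}

typedef struct {
    toy_port *slot[MAX_SLOTS]; /* slot[0] models the sound device side */
    int count;
} toy_bridge;

/* Add two samples, clipping to the int16_t range instead of wrapping. */
static int16_t mix_sat(int16_t a, int16_t b) {
    int32_t s = (int32_t)a + (int32_t)b;
    if (s > INT16_MAX) s = INT16_MAX;
    if (s < INT16_MIN) s = INT16_MIN;
    return (int16_t)s;
}

/* Bridge get_frame: pull one frame from every slot, send each slot the mix
 * of all other slots, and return the mix for slot 0 to the caller.
 * Simplification: every slot is assumed connected to every other slot. */
static void toy_bridge_get_frame(toy_bridge *b, int16_t *out) {
    int16_t in[MAX_SLOTS][FRAME_LEN];
    assert(b->count <= MAX_SLOTS);

    for (int s = 0; s < b->count; ++s)
        toy_get_frame(b->slot[s], in[s]);

    for (int dst = 0; dst < b->count; ++dst) {
        int16_t mixed[FRAME_LEN] = {0};
        for (int src = 0; src < b->count; ++src) {
            if (src == dst)
                continue;            /* a participant does not hear itself */
            for (int i = 0; i < FRAME_LEN; ++i)
                mixed[i] = mix_sat(mixed[i], in[src][i]);
        }
        if (dst == 0)
            memcpy(out, mixed, sizeof mixed);   /* goes back to the speaker */
        else
            toy_put_frame(b->slot[dst], mixed); /* e.g. a stream to encode  */
    }
}

/* Model of the sound device's play callback: the device asks for a frame and
 * the request is translated into a get_frame call on the bridge. */
static void toy_play_cb(void *user_data, int16_t *speaker_buf) {
    toy_bridge_get_frame((toy_bridge *)user_data, speaker_buf);
}
```

Each call to `toy_play_cb()`, made once per speaker period, drives one full get/mix/put cycle. The bridge has no clock of its own, which matches the statement that the sound device's timing drives the whole flow.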
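The receive side of a stream port, as described above, can be sketched as follows. This is a minimal illustration that assumes G.711 mu-law is the configured codec and that the jitter buffer is a fixed-size ring indexed by RTP sequence number. `toy_stream`, `jb_put()` and `stream_get_frame()` are hypothetical names. The loss concealment simply replays the previous frame at half amplitude. That naive technique was chosen for brevity and is not the PLC that pjmedia actually applies. The locking needed between the network thread and the bridge is also omitted.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

#define FRAME_LEN 4     /* samples per frame (tiny, for illustration) */
#define JB_SLOTS  8     /* capacity of the toy jitter buffer          */

/* G.711 mu-law byte -> 16-bit linear PCM (standard expansion). */
static int16_t ulaw_to_pcm(uint8_t u) {
    u = (uint8_t)~u;
    int sign = u & 0x80;
    int exponent = (u >> 4) & 0x07;
    int mantissa = u & 0x0F;
    int sample = (((mantissa << 3) + 0x84) << exponent) - 0x84;
    return (int16_t)(sign ? -sample : sample);
}

/* Hypothetical stream with a jitter buffer of encoded frames, indexed by RTP
 * sequence number. A real one is filled by the network thread and needs a
 * lock; that is omitted here. */
typedef struct {
    uint8_t  payload[JB_SLOTS][FRAME_LEN];
    int      present[JB_SLOTS];
    uint16_t next_seq;            /* sequence number to play next   */
    int16_t  last_pcm[FRAME_LEN]; /* last output frame, used by PLC */
} toy_stream;

/* Network side: store a received frame (socket-polling thread). */
static void jb_put(toy_stream *st, uint16_t seq, const uint8_t *payload) {
    int idx = seq % JB_SLOTS;
    memcpy(st->payload[idx], payload, FRAME_LEN);
    st->present[idx] = 1;
}

/* Stream get_frame: take the next frame from the jitter buffer and decode it,
 * or conceal the loss by replaying the previous frame at half amplitude
 * (a naive PLC chosen for brevity, not pjmedia's algorithm). */
static void stream_get_frame(toy_stream *st, int16_t *out) {
    assert(st != NULL && out != NULL);
    int idx = st->next_seq % JB_SLOTS;
    if (st->present[idx]) {
        for (int i = 0; i < FRAME_LEN; ++i)
            out[i] = ulaw_to_pcm(st->payload[idx][i]);
        st->present[idx] = 0;     /* slot consumed */
    } else {
        for (int i = 0; i < FRAME_LEN; ++i)
            out[i] = (int16_t)(st->last_pcm[i] / 2);
    }
    memcpy(st->last_pcm, out, sizeof st->last_pcm);
    st->next_seq++;               /* wraps at 65536, a multiple of JB_SLOTS */
}
```

With frames 0 and 2 present and frame 1 missing, three consecutive `stream_get_frame()` calls return a decoded frame, a concealed frame derived from it, and then the next decoded frame.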
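The transmit side of a stream port can be sketched in the same spirit. The sketch encodes with G.711 mu-law (an assumed codec) and prepends the 12-byte fixed RTP header defined in RFC 3550. It then updates the sender packet and octet counters that an RTCP sender report would carry and hands the packet to a transport callback. `toy_tx_stream`, `toy_capture`, `capture_send()` and `stream_put_frame()` are hypothetical. `capture_send()` merely records the packet in place of a real UDP transport. RTCP report scheduling and transmission are left out.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define FRAME_LEN   4    /* samples per frame (tiny, for illustration) */
#define RTP_HDR_LEN 12   /* fixed RTP header, no CSRCs or extensions   */

/* G.711 mu-law encoder: 16-bit linear PCM -> one byte (standard compression). */
static uint8_t pcm_to_ulaw(int16_t pcm) {
    const int BIAS = 0x84, CLIP = 32635;
    int sign = (pcm < 0) ? 0x80 : 0;
    int mag = (pcm < 0) ? -(int)pcm : pcm;
    if (mag > CLIP) mag = CLIP;
    mag += BIAS;
    int exponent = 7;            /* locate the segment from the highest set bit */
    for (int mask = 0x4000; (mag & mask) == 0 && exponent > 0; mask >>= 1)
        exponent--;
    int mantissa = (mag >> (exponent + 3)) & 0x0F;
    return (uint8_t)~(sign | (exponent << 4) | mantissa);
}

/* Hypothetical media transport: just a send callback. */
typedef void (*toy_send_fn)(void *user, const uint8_t *pkt, size_t len);

/* Hypothetical stream state: RTP session fields, RTCP sender counters,
 * and the transport the stream was attached to. */
typedef struct {
    uint8_t     pt;          /* RTP payload type (0 = PCMU)             */
    uint16_t    seq;         /* next RTP sequence number                */
    uint32_t    ts;          /* RTP timestamp of the next frame         */
    uint32_t    ssrc;        /* synchronization source identifier       */
    uint32_t    rtcp_pkts;   /* sender packet count reported in RTCP SR */
    uint32_t    rtcp_octets; /* sender payload octet count for RTCP SR  */
    toy_send_fn send;
    void       *send_user;
} toy_tx_stream;

/* Example transport that records the last packet (stands in for UDP). */
typedef struct { uint8_t buf[64]; size_t len; } toy_capture;

static void capture_send(void *user, const uint8_t *pkt, size_t len) {
    toy_capture *c = user;
    assert(len <= sizeof c->buf);
    memcpy(c->buf, pkt, len);
    c->len = len;
}

/* Write a 32-bit value in network byte order. */
static void put_be32(uint8_t *p, uint32_t v) {
    p[0] = (uint8_t)(v >> 24); p[1] = (uint8_t)(v >> 16);
    p[2] = (uint8_t)(v >> 8);  p[3] = (uint8_t)v;
}

/* Stream put_frame: encode, packetize as RTP, update RTCP counters, send. */
static void stream_put_frame(toy_tx_stream *st, const int16_t *pcm) {
    uint8_t pkt[RTP_HDR_LEN + FRAME_LEN];
    assert(st != NULL && st->send != NULL);

    pkt[0] = 0x80;                        /* V=2, P=0, X=0, CC=0 */
    pkt[1] = (uint8_t)(st->pt & 0x7F);    /* M=0, payload type   */
    pkt[2] = (uint8_t)(st->seq >> 8);
    pkt[3] = (uint8_t)(st->seq & 0xFF);
    put_be32(pkt + 4, st->ts);
    put_be32(pkt + 8, st->ssrc);
    for (int i = 0; i < FRAME_LEN; ++i)   /* the codec step */
        pkt[RTP_HDR_LEN + i] = pcm_to_ulaw(pcm[i]);

    st->seq++;                            /* wraps at 65535 -> 0 */
    st->ts += FRAME_LEN;                  /* PCMU clock counts samples */
    st->rtcp_pkts++;
    st->rtcp_octets += FRAME_LEN;

    st->send(st->send_user, pkt, sizeof pkt);
}
```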
